One-Stop Solution Provider

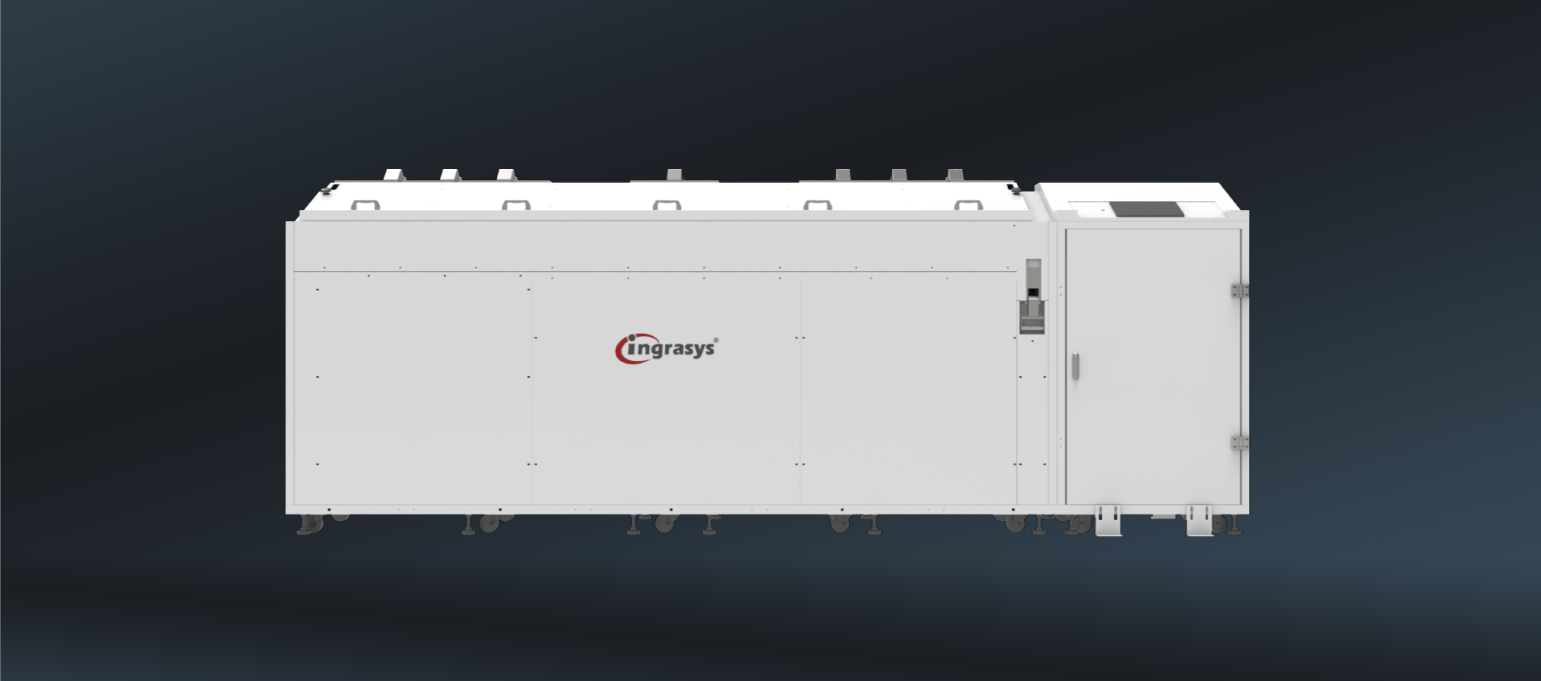

As semiconductor technology advances, the computational power of chips is rising rapidly, and so is the heat they generate. Traditional air cooling is becoming less effective at dissipating this heat. To address this, Ingrasys established a 'High-Efficiency Cooling Laboratory' years ago and offers a comprehensive range of customized rack-level liquid and immersion cooling solutions for a variety of scenarios. These solutions not only handle the increased computational demands but also reduce energy consumption and operational costs, making data centers greener and more efficient.

Features

High Power Density

Lower PUE

Quieter Operation

Enhanced Performance

Liquid Cooling Solution Options

Rack-Level Liquid Cooling
